8. StatefulSet Controller

8.1 Introduction to StatefulSet

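From the earlier chapters we know that Pods created by a Deployment are stateless. If such a Pod has a Volume mounted and the Pod dies, the ReplicaSet controller behind the Deployment starts a new Pod to maintain availability. Because the Pods are stateless, however, the replacement gets a new name and identity, the tie between the old Pod and its Volume is broken, and the new Pod has no way to find out what the old Pod was using. Users are not aware that the underlying Pod has died, yet after the failure the storage volume that was mounted before can no longer be used in the same way.

StatefulSet was introduced to solve this problem by preserving each Pod's state information.

StatefulSet is designed for stateful services (whereas Deployments and ReplicaSets are designed for stateless services). Its use cases include: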

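1. Stable persistent storage: a Pod can still access the same persistent data after it is rescheduled. This is implemented with PVCs.

2. Stable network identity: a Pod keeps its Pod name and hostname after it is rescheduled. This is implemented with a Headless Service (a Service without a Cluster IP).

3. Ordered deployment and ordered scale-out: Pods are ordered, and deployment or scale-out proceeds in the defined order (from 0 to N-1; before the next Pod is started, all preceding Pods must be Running and Ready). The StatefulSet controller enforces this order itself through its default `OrderedReady` pod management policy; init containers are not involved.

4. Ordered scale-in and ordered deletion (from N-1 to 0).

5. Ordered rolling updates.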
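The ordering rules in items 3 and 4 can be illustrated with a few lines of shell. This is only a sketch of the naming and ordering convention, not of how the controller works internally. The helper names `create_order` and `delete_order` are made up for this example, and `statefulset-nginx` with 3 replicas are sample values.

```shell
# Sketch only: print the order in which a StatefulSet's Pods are
# created and removed. Helper names and sample values are illustrative.

# Pods are created from ordinal 0 up to N-1.
create_order() (
  name=$1
  replicas=$2
  i=0
  while [ "$i" -lt "$replicas" ]; do
    echo "${name}-${i}"
    i=$((i + 1))
  done
)

# Pods are removed from ordinal N-1 down to 0.
delete_order() (
  name=$1
  replicas=$2
  i=$((replicas - 1))
  while [ "$i" -ge 0 ]; do
    echo "${name}-${i}"
    i=$((i - 1))
  done
)

echo "create:"; create_order statefulset-nginx 3
echo "delete:"; delete_order statefulset-nginx 3
```

With 3 replicas, the first list runs from `statefulset-nginx-0` to `statefulset-nginx-2` and the second list is the reverse.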

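A StatefulSet setup consists of three parts:

Headless Service: generates resolvable DNS records for the Pod identifiers.

volumeClaimTemplates (volume claim templates): provide each Pod with its own dedicated, fixed storage, based on static or dynamic PV provisioning.

StatefulSet: manages the Pod resources.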

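8.2 Why a headless Service is needed

In a Deployment, Pods have no fixed name: each name ends in a random string and the Pods are unordered. A StatefulSet requires order, so the name of every Pod must be fixed. When a Pod fails and is rebuilt, its identifier stays the same; the name of each member must never change. The Pod name is the unique identifier by which a Pod is recognized, so it has to be both stable and unique. To keep the identifier stable, a headless Service is needed so that DNS resolves directly to the Pod, and each Pod must also be given a unique name.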
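As a rough illustration, the DNS name that a headless Service gives each Pod of a StatefulSet follows the pattern `<pod-name>.<service-name>.<namespace>.svc.<cluster-domain>`. The sketch below only assembles that string. `pod_fqdn` is a hypothetical helper, `cluster.local` is assumed as the cluster domain (the usual default), and the arguments are sample values.

```shell
# Sketch only: build the per-Pod DNS name served by a headless Service.
# Usage: pod_fqdn STATEFULSET ORDINAL SERVICE NAMESPACE [CLUSTER_DOMAIN]
pod_fqdn() {
  # The Pod name is "<statefulset>-<ordinal>"; the cluster domain
  # falls back to "cluster.local" when the fifth argument is omitted.
  echo "$1-$2.$3.$4.svc.${5:-cluster.local}"
}

pod_fqdn statefulset-nginx 0 sts-nginx-svc default
# prints statefulset-nginx-0.sts-nginx-svc.default.svc.cluster.local
```

Because the name is derived only from the StatefulSet name, the ordinal, and the Service, it stays the same when the Pod is recreated, even though the Pod IP behind it may change.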

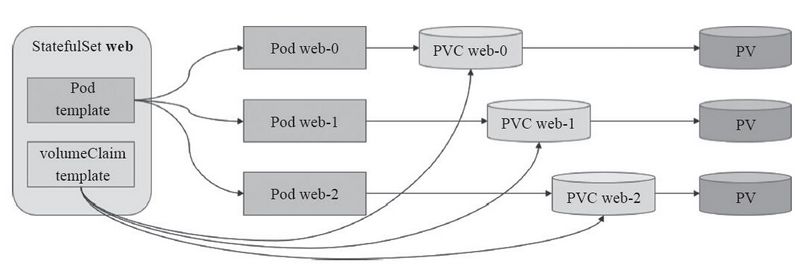

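8.3 Why volumeClaimTemplates is needed

Most stateful workloads need persistent storage. In a distributed system, for example, each member holds different data, so each member needs its own dedicated storage. A volume defined in the Pod template of a Deployment is shared: every Pod created from the template uses the same volume. The Pods of a StatefulSet must not share one volume, so creating volumes from the Pod template alone does not fit. This is why volumeClaimTemplates is needed: when the StatefulSet creates a Pod, a PVC is generated automatically for that Pod, and the PVC requests and binds a PV, so the Pod gets its own dedicated volume. The relationship among Pod names, PVCs, and PVs is one-to-one: each Pod gets its own PVC, and each PVC binds to its own PV.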
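The PVC names generated from a claim template follow the pattern `<template-name>-<statefulset-name>-<ordinal>`. The sketch below lists the names that would result; `pvc_names` is a hypothetical helper, and `www`, `statefulset-nginx`, and 3 are sample values.

```shell
# Sketch only: list the PVC names a StatefulSet derives from one
# volumeClaimTemplate. Usage: pvc_names TEMPLATE STATEFULSET REPLICAS
pvc_names() (
  template=$1
  sts=$2
  replicas=$3
  i=0
  while [ "$i" -lt "$replicas" ]; do
    # One PVC per Pod ordinal, so no two Pods share a claim.
    echo "${template}-${sts}-${i}"
    i=$((i + 1))
  done
)

pvc_names www statefulset-nginx 3
# prints www-statefulset-nginx-0, www-statefulset-nginx-1 and
# www-statefulset-nginx-2, one per line
```

Since the PVC name is tied to the Pod's ordinal, a recreated Pod with the same ordinal picks up the same claim and therefore the same data.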

8.4 StatefulSet manifest format

Top-level fields of the StatefulSet resource, as printed by `kubectl explain`:

```
KIND:     StatefulSet
VERSION:  apps/v1

DESCRIPTION:
     StatefulSet represents a set of pods with consistent identities.
     Identities are defined as:
     - Network: A single stable DNS and hostname.
     - Storage: As many VolumeClaims as requested.
     The StatefulSet guarantees that a given network identity will always
     map to the same storage identity.

FIELDS:
   apiVersion   <string>
   kind         <string>
   metadata     <Object>
   spec         <Object>
   status       <Object>
```

Fields of `spec`:

```
KIND:     StatefulSet
VERSION:  apps/v1

RESOURCE: spec <Object>

DESCRIPTION:
     Spec defines the desired identities of pods in this set.

     A StatefulSetSpec is the specification of a StatefulSet.

FIELDS:
   podManagementPolicy    <string>
   replicas               <integer>
   revisionHistoryLimit   <integer>
   selector               <Object> -required-
   serviceName            <string> -required-
   template               <Object> -required-
   updateStrategy         <Object>
   volumeClaimTemplates   <[]Object>
```

8.5 StatefulSet demo

Several things have to be prepared before a StatefulSet is created, and the order in which they are created matters:

1. Volume

2. Persistent Volume

3. Headless Service

4. StatefulSet

Volumes come in many types, such as NFS and GlusterFS. This demo uses NFS.

⓪ Create the NFS shared directories and generate a test index page in each

```
[root@hdp01 /]$ mkdir -p /data/volumes/v{1..5}
[root@hdp01 /]$ for i in $(seq 5); do echo "/data/volumes/v$i index.html" > /data/volumes/v$i/index.html; done
```

① Create the PV manifest and apply it

The manifest defines five 2Gi NFS-backed PVs, one per shared directory:

```
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-01
  labels:
    type: ssd-pv
spec:
  accessModes:
  - ReadWriteOnce
  - ReadWriteMany
  capacity:
    storage: 2Gi
  nfs:
    server: 192.168.1.21
    path: /data/volumes/v1
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-02
  labels:
    type: ssd-pv
spec:
  accessModes:
  - ReadWriteOnce
  - ReadWriteMany
  capacity:
    storage: 2Gi
  nfs:
    server: 192.168.1.21
    path: /data/volumes/v2
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-03
  labels:
    type: ssd-pv
spec:
  accessModes:
  - ReadWriteOnce
  - ReadWriteMany
  capacity:
    storage: 2Gi
  nfs:
    server: 192.168.1.21
    path: /data/volumes/v3
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-04
  labels:
    type: ssd-pv
spec:
  accessModes:
  - ReadWriteOnce
  - ReadWriteMany
  capacity:
    storage: 2Gi
  nfs:
    server: 192.168.1.21
    path: /data/volumes/v4
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-05
  labels:
    type: ssd-pv
spec:
  accessModes:
  - ReadWriteOnce
  - ReadWriteMany
  capacity:
    storage: 2Gi
  nfs:
    server: 192.168.1.21
    path: /data/volumes/v5
```

After the manifest is applied, all five PVs are listed as Available:

```
NAME    CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS      CLAIM   STORAGECLASS   REASON   AGE
pv-01   2Gi        RWO,RWX        Retain           Available                                   19m
pv-02   2Gi        RWO,RWX        Retain           Available                                   19m
pv-03   2Gi        RWO,RWX        Retain           Available                                   19m
pv-04   2Gi        RWO,RWX        Retain           Available                                   19m
pv-05   2Gi        RWO,RWX        Retain           Available                                   19m
```
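Because the five PV definitions differ only in their name and NFS path, the manifest could also be generated with a loop instead of being written by hand. The following is a minimal sketch under that assumption; `gen_pvs` is a hypothetical helper, and the server address, label, and sizes simply repeat the values used above.

```shell
# Sketch only: print COUNT PersistentVolume documents to stdout.
# Usage: gen_pvs COUNT NFS_SERVER
gen_pvs() (
  count=$1
  server=$2
  i=1
  while [ "$i" -le "$count" ]; do
    # Separate YAML documents with '---' (not needed before the first).
    if [ "$i" -gt 1 ]; then echo "---"; fi
    # Zero-padded name: pv-01, pv-02, ...
    name=$(printf 'pv-%02d' "$i")
    cat <<EOF
apiVersion: v1
kind: PersistentVolume
metadata:
  name: ${name}
  labels:
    type: ssd-pv
spec:
  accessModes:
  - ReadWriteOnce
  - ReadWriteMany
  capacity:
    storage: 2Gi
  nfs:
    server: ${server}
    path: /data/volumes/v${i}
EOF
    i=$((i + 1))
  done
)

gen_pvs 5 192.168.1.21
```

The function only prints YAML. Its output could be redirected to a file, or piped to `kubectl apply -f -` on a machine with cluster access.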

② Create the StatefulSet manifest, defining the headless Service and volumeClaimTemplates

```
[root@k8s-master statefulset]$ cat sts.yaml
apiVersion: v1
kind: Service
metadata:
  name: sts-nginx-svc
  labels:
    app: sts-nginx-svc
spec:
  ports:
  - port: 80
    name: web
  clusterIP: None            # headless Service: no Cluster IP
  selector:
    app: sts-nginx
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: statefulset-nginx
spec:
  selector:
    matchLabels:
      app: sts-nginx
  serviceName: "sts-nginx-svc"   # must match the headless Service above
  replicas: 3
  template:
    metadata:
      labels:
        app: sts-nginx
    spec:
      containers:
      - name: nginx01
        image: hub.nnv5.cn/test/myapp:v2
        ports:
        - containerPort: 80
          name: web
        volumeMounts:
        - name: www              # refers to the claim template below
          mountPath: /usr/share/nginx/html/nfs
  volumeClaimTemplates:          # one PVC is generated per Pod
  - metadata:
      name: www
    spec:
      accessModes: [ "ReadWriteOnce" ]
      resources:
        requests:
          storage: 2Gi
[root@k8s-master statefulset]$ kubectl apply -f sts.yaml
```

③ Check and verify

Pods, Services, PVCs, and PVs after the StatefulSet has been created. Each Pod's PVC (www-statefulset-nginx-0 to www-statefulset-nginx-2) is bound to its own PV:

```
NAME                      READY   STATUS    RESTARTS   AGE     IP            NODE                 NOMINATED NODE   READINESS GATES
pod/statefulset-nginx-0   1/1     Running   0          2m11s   10.244.2.59   k8s-node02.nnv5.cn   <none>           <none>
pod/statefulset-nginx-1   1/1     Running   0          27m     10.244.1.54   k8s-node01.nnv5.cn   <none>           <none>
pod/statefulset-nginx-2   1/1     Running   0          26m     10.244.2.58   k8s-node02.nnv5.cn   <none>           <none>

NAME                    TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)   AGE
service/kubernetes      ClusterIP   10.96.0.1    <none>        443/TCP   7d22h
service/sts-nginx-svc   ClusterIP   None         <none>        80/TCP    11m

NAME                                            STATUS   VOLUME   CAPACITY   ACCESS MODES   STORAGECLASS   AGE
persistentvolumeclaim/www-statefulset-nginx-0   Bound    pv-01    2Gi        RWO,RWX                       11m
persistentvolumeclaim/www-statefulset-nginx-1   Bound    pv-02    2Gi        RWO,RWX                       11m
persistentvolumeclaim/www-statefulset-nginx-2   Bound    pv-03    2Gi        RWO,RWX                       11m

NAME                     CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS      CLAIM                             STORAGECLASS   REASON   AGE
persistentvolume/pv-01   2Gi        RWO,RWX        Retain           Bound       default/www-statefulset-nginx-0                           25m
persistentvolume/pv-02   2Gi        RWO,RWX        Retain           Bound       default/www-statefulset-nginx-1                           25m
persistentvolume/pv-03   2Gi        RWO,RWX        Retain           Bound       default/www-statefulset-nginx-2                           25m
persistentvolume/pv-04   2Gi        RWO,RWX        Retain           Available                                                             25m
persistentvolume/pv-05   2Gi        RWO,RWX        Retain           Available                                                             25m
```

Fetching the test page from each of the three Pods returns the index file of that Pod's own volume:

```
/data/volumes/v1 index.html
/data/volumes/v2 index.html
/data/volumes/v3 index.html
```

Pod list before the deletion test:

```
NAME                  READY   STATUS    RESTARTS   AGE     IP            NODE                 NOMINATED NODE   READINESS GATES
statefulset-nginx-0   1/1     Running   0          5m26s   10.244.2.59   k8s-node02.nnv5.cn   <none>           <none>
statefulset-nginx-1   1/1     Running   0          30m     10.244.1.54   k8s-node01.nnv5.cn   <none>           <none>
statefulset-nginx-2   1/1     Running   0          30m     10.244.2.58   k8s-node02.nnv5.cn   <none>           <none>
```

Deleting statefulset-nginx-1:

```
pod "statefulset-nginx-1" deleted
```

The Pod is recreated under the same name, with a new IP:

```
NAME                  READY   STATUS    RESTARTS   AGE   IP            NODE                 NOMINATED NODE   READINESS GATES
statefulset-nginx-0   1/1     Running   0          15m   10.244.2.59   k8s-node02.nnv5.cn   <none>           <none>
statefulset-nginx-1   1/1     Running   0          2s    10.244.1.55   k8s-node01.nnv5.cn   <none>           <none>
statefulset-nginx-2   1/1     Running   0          40m   10.244.2.58   k8s-node02.nnv5.cn   <none>           <none>
```

Fetching the test page again still returns the content of the same volume:

```
/data/volumes/v2 index.html
```

8.6 Rolling updates, scaling, version upgrades, and changing the update strategy

1. Rolling update

The RollingUpdate update strategy gives the Pods of a StatefulSet automated rolling updates. RollingUpdate is the default value of .spec.updateStrategy.type. With this strategy, the StatefulSet controller deletes and recreates each Pod in the StatefulSet. It proceeds in the same order as Pod termination (from the largest ordinal to the smallest), updating one Pod at a time, and it waits until the updated Pod is Running and Ready before it updates that Pod's predecessor. In the example below, the rolling update runs from Pod 2 down to Pod 0.

Change the image in sts.yaml to v4:

```
......
        image: hub.nnv5.cn/test/myapp:v4
......
```

Apply the manifest and watch the Pods being replaced:

```
[root@k8s-master statefulset]$ kubectl apply -f sts.yaml
service/sts-nginx-svc unchanged
statefulset.apps/statefulset-nginx configured

[root@k8s-node01 opt]$ kubectl get pods -o wide -w
statefulset-nginx-2   1/1   Terminating         0   74m   10.244.2.58   k8s-node02.nnv5.cn   <none>   <none>
statefulset-nginx-2   0/1   ContainerCreating   0   0s    <none>        k8s-node02.nnv5.cn   <none>   <none>
statefulset-nginx-2   1/1   Running             0   2s    10.244.2.60   k8s-node02.nnv5.cn   <none>   <none>
statefulset-nginx-1   1/1   Terminating         0   34m   10.244.1.55   k8s-node01.nnv5.cn   <none>   <none>
statefulset-nginx-1   0/1   ContainerCreating   0   0s    <none>        k8s-node01.nnv5.cn   <none>   <none>
statefulset-nginx-1   1/1   Running             0   2s    10.244.1.57   k8s-node01.nnv5.cn   <none>   <none>
statefulset-nginx-0   1/1   Terminating         0   50m   10.244.2.59   k8s-node02.nnv5.cn   <none>   <none>
statefulset-nginx-0   0/1   ContainerCreating   0   0s    <none>        k8s-node02.nnv5.cn   <none>   <none>
statefulset-nginx-0   1/1   Running             0   2s    10.244.2.61   k8s-node02.nnv5.cn   <none>   <none>
```

From inside any of the Pods, the name of every Pod can be resolved through DNS.

Lookup of statefulset-nginx-0:

```
nslookup: can't resolve '(null)': Name does not resolve

Name:      statefulset-nginx-0.sts-nginx-svc.default.svc.cluster.local
Address 1: 10.244.2.61 statefulset-nginx-0.sts-nginx-svc.default.svc.cluster.local
```

Lookup of statefulset-nginx-1, run from statefulset-nginx-0:

```
[root@k8s-master secret]# kubectl exec -it statefulset-nginx-0 -- nslookup statefulset-nginx-1.sts-nginx-svc.default.svc.cluster.local
nslookup: can't resolve '(null)': Name does not resolve

Name:      statefulset-nginx-1.sts-nginx-svc.default.svc.cluster.local
Address 1: 10.244.1.57 statefulset-nginx-1.sts-nginx-svc.default.svc.cluster.local
```

Lookup of statefulset-nginx-2:

```
nslookup: can't resolve '(null)': Name does not resolve

Name:      statefulset-nginx-2.sts-nginx-svc.default.svc.cluster.local
Address 1: 10.244.2.60 statefulset-nginx-2.sts-nginx-svc.default.svc.cluster.local
```

2. Scaling out and in

Scale out to 4 replicas. The new Pod statefulset-nginx-3 is created, and its PVC is bound to pv-04:

```
[root@k8s-master secret]$ kubectl scale statefulset statefulset-nginx --replicas=4
statefulset.apps/statefulset-nginx scaled

[root@k8s-node01 opt]$ kubectl get pods -o wide -w
NAME                       READY   STATUS              RESTARTS   AGE     IP            NODE                 NOMINATED NODE   READINESS GATES
busybox-6f99677459-qhjpd   1/1     Running             0          27m     10.244.1.56   k8s-node01.nnv5.cn   <none>           <none>
statefulset-nginx-0        1/1     Running             0          7m39s   10.244.2.61   k8s-node02.nnv5.cn   <none>           <none>
statefulset-nginx-1        1/1     Running             0          7m43s   10.244.1.57   k8s-node01.nnv5.cn   <none>           <none>
statefulset-nginx-2        1/1     Running             0          7m47s   10.244.2.60   k8s-node02.nnv5.cn   <none>           <none>
statefulset-nginx-3        0/1     Pending             0          0s      <none>        <none>               <none>           <none>
statefulset-nginx-3        0/1     ContainerCreating   0          2s      <none>        k8s-node01.nnv5.cn   <none>           <none>
statefulset-nginx-3        1/1     Running             0          4s      10.244.1.58   k8s-node01.nnv5.cn   <none>           <none>

[root@k8s-master secret]$ kubectl get pvc,pv
NAME                                            STATUS   VOLUME   CAPACITY   ACCESS MODES   STORAGECLASS   AGE
persistentvolumeclaim/www-statefulset-nginx-0   Bound    pv-01    2Gi        RWO,RWX                       86m
persistentvolumeclaim/www-statefulset-nginx-1   Bound    pv-02    2Gi        RWO,RWX                       86m
persistentvolumeclaim/www-statefulset-nginx-2   Bound    pv-03    2Gi        RWO,RWX                       86m
persistentvolumeclaim/www-statefulset-nginx-3   Bound    pv-04    2Gi        RWO,RWX                       3m27s

NAME                     CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS      CLAIM                             STORAGECLASS   REASON   AGE
persistentvolume/pv-01   2Gi        RWO,RWX        Retain           Bound       default/www-statefulset-nginx-0                           99m
persistentvolume/pv-02   2Gi        RWO,RWX        Retain           Bound       default/www-statefulset-nginx-1                           99m
persistentvolume/pv-03   2Gi        RWO,RWX        Retain           Bound       default/www-statefulset-nginx-2                           99m
persistentvolume/pv-04   2Gi        RWO,RWX        Retain           Bound       default/www-statefulset-nginx-3                           99m
persistentvolume/pv-05   2Gi        RWO,RWX        Retain           Available                                                             99m
```

Scale in to 2 replicas. Pods are removed from the highest ordinal down (3 first, then 2), while their PVCs and PVs are kept and stay Bound:

```
[root@k8s-master secret]$ kubectl scale statefulset statefulset-nginx --replicas=2
statefulset.apps/statefulset-nginx scaled

[root@k8s-node01 opt]$ kubectl get pods -o wide -w
statefulset-nginx-3   1/1   Terminating   0   5m32s   10.244.1.58   k8s-node01.nnv5.cn   <none>   <none>
statefulset-nginx-3   0/1   Terminating   0   5m33s   10.244.1.58   k8s-node01.nnv5.cn   <none>   <none>
statefulset-nginx-3   0/1   Terminating   0   5m45s   10.244.1.58   k8s-node01.nnv5.cn   <none>   <none>
statefulset-nginx-3   0/1   Terminating   0   5m45s   10.244.1.58   k8s-node01.nnv5.cn   <none>   <none>
statefulset-nginx-2   1/1   Terminating   0   14m     10.244.2.60   k8s-node02.nnv5.cn   <none>   <none>
statefulset-nginx-2   0/1   Terminating   0   14m     10.244.2.60   k8s-node02.nnv5.cn   <none>   <none>
statefulset-nginx-2   0/1   Terminating   0   14m     10.244.2.60   k8s-node02.nnv5.cn   <none>   <none>
statefulset-nginx-2   0/1   Terminating   0   14m     10.244.2.60   k8s-node02.nnv5.cn   <none>   <none>

[root@k8s-master secret]$ kubectl get pvc,pv
NAME                                            STATUS   VOLUME   CAPACITY   ACCESS MODES   STORAGECLASS   AGE
persistentvolumeclaim/www-statefulset-nginx-0   Bound    pv-01    2Gi        RWO,RWX                       92m
persistentvolumeclaim/www-statefulset-nginx-1   Bound    pv-02    2Gi        RWO,RWX                       92m
persistentvolumeclaim/www-statefulset-nginx-2   Bound    pv-03    2Gi        RWO,RWX                       92m
persistentvolumeclaim/www-statefulset-nginx-3   Bound    pv-04    2Gi        RWO,RWX                       9m28s

NAME                     CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS      CLAIM                             STORAGECLASS   REASON   AGE
persistentvolume/pv-01   2Gi        RWO,RWX        Retain           Bound       default/www-statefulset-nginx-0                           105m
persistentvolume/pv-02   2Gi        RWO,RWX        Retain           Bound       default/www-statefulset-nginx-1                           105m
persistentvolume/pv-03   2Gi        RWO,RWX        Retain           Bound       default/www-statefulset-nginx-2                           105m
persistentvolume/pv-04   2Gi        RWO,RWX        Retain           Bound       default/www-statefulset-nginx-3                           105m
persistentvolume/pv-05   2Gi        RWO,RWX        Retain           Available                                                             105m
```

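3. Canary update of the Pod version

Change the update strategy so that updates are controlled by a partition. With partition set to N, only Pods whose ordinal is greater than or equal to N are updated. For example, if the StatefulSet has 5 Pods and partition is set to 4, only Pods with an ordinal of 4 or higher are updated, which means only Pod 4. To update all Pods later, set partition to 0. This works much like a canary deployment.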
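The partition rule can be written down as a small shell sketch: given the replica count and the partition value, it lists the Pods that a rolling update would touch, highest ordinal first. `pods_to_update` is a hypothetical helper used only for illustration, and the arguments are sample values.

```shell
# Sketch only: list the Pods affected by a partitioned rolling update.
# Usage: pods_to_update STATEFULSET REPLICAS PARTITION
pods_to_update() (
  sts=$1
  replicas=$2
  partition=$3
  # Walk from the highest ordinal down and stop below the partition.
  i=$((replicas - 1))
  while [ "$i" -ge "$partition" ]; do
    echo "${sts}-${i}"
    i=$((i - 1))
  done
)

pods_to_update statefulset-nginx 5 4   # prints statefulset-nginx-4 only
pods_to_update statefulset-nginx 5 0   # prints all five, from -4 down to -0
```

A partition equal to or larger than the replica count selects no Pods at all, so a template change would then leave every running Pod as it is.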

Set the partition to 4:

```
kubectl patch statefulset statefulset-nginx -p '{"spec":{"updateStrategy":{"type":"RollingUpdate","rollingUpdate":{"partition":4}}}}'
```

After the image is changed, only statefulset-nginx-4 is restarted:

```
statefulset.apps/statefulset-nginx image updated

[root@k8s-master secret]$ kubectl get pods -w
NAME                       READY   STATUS              RESTARTS   AGE
busybox-6f99677459-qhjpd   1/1     Running             0          59m
statefulset-nginx-0        1/1     Running             0          39m
statefulset-nginx-1        1/1     Running             0          39m
statefulset-nginx-2        1/1     Running             0          8m20s
statefulset-nginx-3        1/1     Running             0          8m18s
statefulset-nginx-4        1/1     Running             0          5s
statefulset-nginx-4        1/1     Terminating         0          13s
statefulset-nginx-4        0/1     Pending             0          0s
statefulset-nginx-4        0/1     ContainerCreating   0          0s
statefulset-nginx-4        1/1     Running             0          2s
```

Checking the image of each of the five Pods shows one Pod on myapp:v1 while the other four are still on myapp:v4:

```
- image: hub.nnv5.cn/test/myapp:v1
- image: hub.nnv5.cn/test/myapp:v4
- image: hub.nnv5.cn/test/myapp:v4
- image: hub.nnv5.cn/test/myapp:v4
- image: hub.nnv5.cn/test/myapp:v4
```

Set the partition to 0 to update the remaining Pods, from ordinal 3 down to 0:

```
[root@k8s-master statefulset]$ kubectl patch statefulset statefulset-nginx -p '{"spec":{"updateStrategy":{"type":"RollingUpdate","rollingUpdate":{"partition":0}}}}'
statefulset.apps/statefulset-nginx patched
```

```
NAME                       READY   STATUS              RESTARTS   AGE
busybox-6f99677459-qhjpd   1/1     Running             0          64m
statefulset-nginx-0        1/1     Running             0          44m
statefulset-nginx-1        1/1     Running             0          44m
statefulset-nginx-2        1/1     Running             0          13m
statefulset-nginx-3        1/1     Running             0          13m
statefulset-nginx-4        1/1     Running             0          5m5s
statefulset-nginx-3        1/1     Terminating         0          13m
statefulset-nginx-3        0/1     Pending             0          0s
statefulset-nginx-3        0/1     ContainerCreating   0          0s
statefulset-nginx-3        1/1     Running             0          1s
statefulset-nginx-2        1/1     Terminating         0          13m
statefulset-nginx-2        0/1     Pending             0          0s
statefulset-nginx-2        0/1     ContainerCreating   0          0s
statefulset-nginx-2        1/1     Running             0          2s
statefulset-nginx-1        1/1     Terminating         0          45m
statefulset-nginx-1        0/1     Pending             0          0s
statefulset-nginx-1        0/1     ContainerCreating   0          0s
statefulset-nginx-1        1/1     Running             0          1s
statefulset-nginx-0        1/1     Terminating         0          45m
statefulset-nginx-0        0/1     Pending             0          0s
statefulset-nginx-0        0/1     ContainerCreating   0          0s
statefulset-nginx-0        1/1     Running             0          2s
```

All five Pods now run myapp:v1:

```
- image: hub.nnv5.cn/test/myapp:v1
- image: hub.nnv5.cn/test/myapp:v1
- image: hub.nnv5.cn/test/myapp:v1
- image: hub.nnv5.cn/test/myapp:v1
- image: hub.nnv5.cn/test/myapp:v1
```